Markov Chains: A Practitioner's Refresher

Transition matrices, n-step probabilities, and stationary distributions — the mathematical scaffolding behind regime-switching models, credit migration matrices, and any system where the next state depends only on the current one.

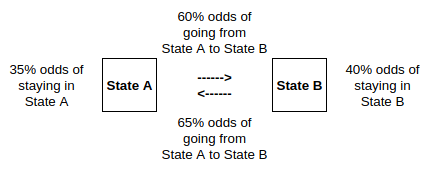

Markov Chains appear throughout quantitative finance: regime-switching models for asset returns, credit rating migration matrices, Hidden Markov Models for signal detection, and any system where the probability of transitioning to the next state depends only on the current state. This post walks through the core mechanics — transition matrices, multi-step probabilities, forecasting, and stationary distributions.

Definition

A Markov Chain is a stochastic process describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event.

Formally:

- Let be a random variable taking values in

- Let denote the transition probability from state to state

- is a Markov Chain if

The state dynamics are fully specified by the transition matrix:

Since the elements of each row must sum to unity:

State Vectors

Let denote the -th row vector of the identity matrix. Let denote a row vector that equals when the state is equal to :

The expectation of is a vector whose -th element is the probability that :

We infer that , or more generally , and since follows a Markov Chain:

In plain English: when , selects the -th row of the identity matrix. Multiplying by the transition matrix extracts the corresponding row of transition probabilities. Because the chain is Markov, only the current state matters — all the history is irrelevant. It’s really just a structured way to look up the right transition probabilities.

Two-Step Transition Probabilities

The probability that given is:

This is the element of . Intuitively, to get from state to state in two steps, we sum over all possible intermediate states — weighting each path by the product of its transition probabilities.

N-Step Transition Probabilities

In general, the probability that given is the element of :

We locate the appropriate entry in the transition matrix raised to the -th power.

Forecasting Transitions

Assume we have received information up to date . Let

denote the conditional probability distribution over the state space. Since , we infer that

If contains no leading information, the forecast state distribution is:

The one-step-ahead conditional distribution is the current distribution multiplied by the transition matrix. All the information is already embedded in .

Stationary Distributions

A distribution is stationary if it satisfies — the forecast distribution is the same as the current one. If the Markov Chain is ergodic, the system

has a unique solution, where denotes the vector of ones. This stationary distribution is the long-run proportion of time spent in each state, regardless of the starting point — a property that makes it central to equilibrium analysis in credit models, economic regime models, and any setting where we care about steady-state behaviour.

Where This Shows Up in Practice

The machinery above is the scaffolding behind several standard tools in quantitative finance:

- Regime-switching models for asset returns (Hamilton, 1989) treat the economy as a Markov chain over latent states — typically a “high-volatility” and “low-volatility” state — and use maximum likelihood or Bayesian inference to back out the transition probabilities from observed returns.

- Credit rating migration matrices published by rating agencies are transition matrices for a Markov chain over rating categories. The n-step probability calculation gives the distribution of likely ratings n years from today, which feeds directly into credit portfolio risk models.

- Hidden Markov Models extend the framework by treating the state as unobservable and the observed data (returns, volumes) as emissions from the hidden state. HMMs are used for trade classification, market microstructure analysis, and anomaly detection.

In all three applications, the stationary distribution tells you the long-run proportion of time the system spends in each state — a quantity that matters for setting capital reserves, computing unconditional risk premia, and understanding the base-rate behaviour of the system you’re modelling.